Morgan Stanley's Latest Semiconductor Report: AI Computing Power Cycle is Expanding to Storage and Packaging

On March 5, 2026, Morgan Stanley released its research report on the Asian semiconductor industry:

Greater China Semiconductors – Bullish on Cloud, Memory and Optical Outlook; Accumulating Ahead of GTC.

The report points out that the core driver of the semiconductor industry remains the construction of AI infrastructure, but market focus is shifting.

While the 2023–2024 AI cycle focused primarily on GPUs, in 2025–2026 AI-driven demand is beginning to spread across the broader semiconductor supply chain, including:

Memory

Advanced Packaging

Custom ASIC chips

Datacenter networking

Morgan Stanley’s conclusion is:

AI compute investment is still in the expansion stage, and the semiconductor industry is entering a new structural demand cycle.

I. Cloud Vendors’ Capex Still Expanding

The core driver of AI semiconductor demand remains the cloud providers.

Morgan Stanley’s statistics show:

In Q4 2025, capital expenditures by the world’s top four cloud vendors (Amazon, Microsoft, Google, Meta) grew year-over-year by 64%.

Expanding to the world’s top ten cloud vendors, Morgan Stanley projects:

Global cloud capital expenditure will approach $685 billion by 2026.

In longer-term forecasts, NVIDIA CEO Jensen Huang has stated:

Global AI infrastructure investments may reach $1 trillion before 2028.

This trend means:

AI infrastructure construction is still in a growth cycle, not at the market peak as some fear.

II. AI Inference Is Changing Memory Demand Structure

Morgan Stanley believes that, in this AI cycle, the most underestimated part is memory demand.

AI inference workloads need to store large amounts of context data, which drives demand for new memory architectures.

The report introduces a concept:

ICMS (Inference Context Memory Storage), a context storage system designed specifically for AI inference.

According to Morgan Stanley’s calculations:

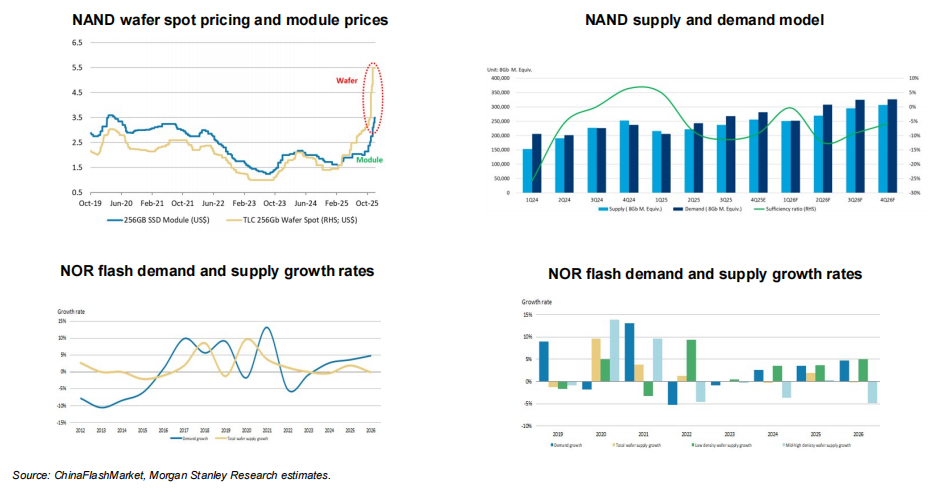

By 2027, AI inference demand will increase global NAND memory demand by an additional 13%.

Meanwhile, the NOR Flash market might also enter a supply-tight cycle.

The report believes:

AI-related memory demand could trigger a new upcycle in the memory industry.

III. HBM as the Key Bottleneck in AI Compute Power

A core factor in improving AI chip performance is High Bandwidth Memory (HBM).

Morgan Stanley estimates:

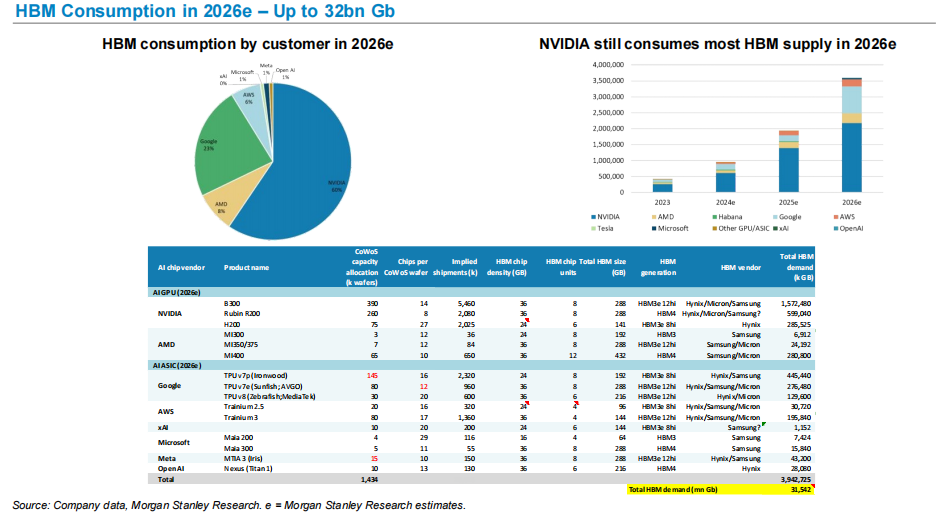

By 2026, global HBM demand may reach about 32 billion Gb.
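To put the unit in perspective, the figure above is quoted in gigabits (Gb), not gigabytes (GB). The short sketch below only converts the report's number into more familiar units. It assumes the "about 32 billion Gb" figure is exact and uses decimal units (1 EB = 10^9 GB); the variable names are illustrative.

```python
# Unit conversion for the HBM demand figure quoted in the report.
# Assumption: "about 32 billion Gb" is treated as exactly 32e9 gigabits.
HBM_DEMAND_GBIT = 32e9  # gigabits (Gb)

BITS_PER_BYTE = 8
hbm_demand_gbyte = HBM_DEMAND_GBIT / BITS_PER_BYTE  # gigabytes (GB)
hbm_demand_ebyte = hbm_demand_gbyte / 1e9           # exabytes (EB), decimal units

print(f"HBM demand: {hbm_demand_gbyte:.1e} GB, or {hbm_demand_ebyte:.1f} EB")
```

In other words, 32 billion Gb corresponds to roughly 4 billion GB of HBM.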

In terms of demand structure:

NVIDIA remains the largest consumer of HBM.

The rapidly growing HBM demand from both AI GPUs and AI ASIC chips makes HBM a key resource in the AI compute supply chain.

This trend also explains why:

SK Hynix

Micron

Samsung

are performing impressively during the AI cycle.

IV. Advanced Packaging Becomes the Bottleneck for AI Chip Production

AI GPUs depend not only on advanced process nodes but also heavily on advanced packaging.

Morgan Stanley expects:

TSMC’s CoWoS advanced packaging capacity may expand to 125k wafers per month by 2026.

Key demand drivers include:

NVIDIA

AMD

Self-developed AI chips by leading cloud vendors

Thus, advanced packaging has become a critical bottleneck in the AI chip supply chain.

V. AI ASIC Is Rapidly Rising

Beyond GPUs, cloud vendors are investing heavily in developing their own AI chips.

The main projects currently include:

Google TPU

Amazon Trainium

Microsoft Maia

Meta MTIA

Morgan Stanley forecasts that AI ASIC shipments will continue to grow in the coming years.

For example:

Shipments of the AWS Trainium chip series are expected to keep climbing in the next few years.

This means the AI compute market will present:

A coexistence of GPU + ASIC development.

VI. Chinese AI GPU Substitution Is Advancing

The report also makes projections on China’s AI chip industry.

Morgan Stanley expects:

China’s GPU self-sufficiency rate will rise from 34% in 2024 to 50% by 2027.

At the same time, China’s AI cloud market size is projected to reach:

about $48 billion by 2027.

This indicates a certain degree of regionalization in the global AI compute industry chain.
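As a rough illustration of the pace implied by the self-sufficiency projection (34% in 2024, 50% by 2027), the sketch below computes the average gain per year. The report gives only the two endpoints, so the straight-line path between them is an assumption made here for illustration, and the function name is hypothetical.

```python
# Endpoints taken from the report; the linear path between them is an
# assumption for illustration, not a Morgan Stanley forecast.
START_YEAR, START_RATE = 2024, 0.34
END_YEAR, END_RATE = 2027, 0.50

years = END_YEAR - START_YEAR
pp_per_year = (END_RATE - START_RATE) / years * 100  # percentage points per year


def interpolated_rate(year: int) -> float:
    """Self-sufficiency rate for a year, assuming a straight-line path."""
    return START_RATE + (END_RATE - START_RATE) * (year - START_YEAR) / years


print(f"Average gain: {pp_per_year:.1f} percentage points per year")
for y in range(START_YEAR, END_YEAR + 1):
    print(y, f"{interpolated_rate(y):.1%}")
```

Under that assumption, the projection works out to an average gain of a little over 5 percentage points per year.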

My Understanding

If the entire report were to be summed up in one sentence, it would be quite simple:

The AI semiconductor cycle is spreading from "compute power" to "the whole supply chain."

Early market focus was on GPUs.

But as the scale of AI infrastructure grows, demand is now spreading to:

Memory

Advanced Packaging

Networking chips

Custom ASICs

This means:

AI is no longer a single chip cycle, but a structural demand cycle for the entire semiconductor supply chain.

For the semiconductor industry, the real transformation is not GPU demand, but rather the long-term construction of computing infrastructure.

Disclaimer: The content of this article solely reflects the author's opinion and does not represent the platform in any capacity. This article is not intended to serve as a reference for making investment decisions.
