Scaling next-generation AI is making it riskier, not better

Opinion by: Mohammed Marikar, co-founder at Neem Capital

So far, artificial intelligence has been defined by scale: bigger models, faster processing, expanding data centers. The assumption, based on traditional technology cycles, was that scale would keep improving performance and that, over time, costs would fall and access would expand.

That assumption is now breaking down. AI is not scaling like other software. Instead, it is capital-intensive, constrained by physical limits, and hitting diminishing returns far earlier than expected.

The numbers make this clear. Electricity demand from global data centers is projected to more than double by 2030, reaching levels once associated with entire industrial sectors. In the US alone, data center power demand is projected to rise well over 100 percent before the decade ends. This expansion demands trillions of dollars in new investment alongside major expansions in grid capacity.

Meanwhile, these systems are being embedded into law, finance, compliance, trading and risk management, where errors propagate quickly and credibility is non-negotiable. In June 2025, the UK High Court warned lawyers to immediately stop submitting filings that cited fabricated case law generated by AI tools.

The scaling AI debate

When an AI system can invent a precedent that never existed, and a professional relies on it, debates about scaling start becoming serious questions of public trust. Scaling is amplifying AI’s weaknesses rather than solving them.

Part of the problem lies in what scale actually improves. Large language models (LLMs) are evolving to become increasingly fluent because language is pattern-based. The more examples an LLM sees of how real people write, summarize and translate, the faster it improves.

Deeper intelligence — reasoning — does not scale the same way. The next generation of AI must understand cause and effect and know when an answer is uncertain or incomplete. It will need to explain why a conclusion follows, not simply produce a confident response. This does not reliably improve with more parameters or more compute.

The consequence is a growing verification burden. Humans must spend more time checking machine output rather than acting on it, and that burden builds as systems are deployed more widely.

The cost of training AI models

Training frontier AI models has already become extraordinarily expensive, with credible tracking suggesting costs have been multiplying year over year, and projections that single training runs could soon exceed $1 billion. Training is only the entry cost.

The larger expense is inference: running these models continuously, at scale, with real latency, uptime and verification requirements. Every query consumes energy. Every deployment requires infrastructure. As usage grows, energy use and costs compound.

In markets and crypto, AI systems are increasingly used to monitor onchain activity, analyze sentiment, generate code for smart contracts, flag suspicious transactions and automate decisions.

In such a fast-moving, competitive environment, fluent but unreliable AI propagates errors quickly: false signals move capital, and fabricated explanations and hallucinations undermine trust. One example is false positives in automated Anti-Money Laundering (AML) flagging, a common problem that wastes time and resources on investigating innocent trading activity.
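The false-positive problem is largely a matter of base rates. As a rough illustration in Python, with every number below a hypothetical assumption rather than data from any real AML system, a flagger that looks accurate on paper can still produce mostly false alarms when genuinely suspicious transactions are rare:

```python
def flag_precision(prevalence: float, sensitivity: float, false_positive_rate: float) -> float:
    """Share of flagged transactions that are truly suspicious (Bayes' rule)."""
    true_flags = prevalence * sensitivity
    false_flags = (1.0 - prevalence) * false_positive_rate
    return true_flags / (true_flags + false_flags)


# Hypothetical inputs, chosen only for illustration:
# 1 in 1,000 transactions is suspicious, the flagger catches 95% of them,
# and it wrongly flags 5% of legitimate transactions.
precision = flag_precision(prevalence=0.001, sensitivity=0.95, false_positive_rate=0.05)
print(f"Share of flags that are real: {precision:.1%}")
```

Under these assumed inputs, fewer than 2 in 100 flags would point to real suspicious activity, and the rest would be investigations of innocent trading.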

Time to improve reasoning

Scaling AI systems without improving their reasoning amplifies risk, especially in use cases where automation and credibility are vital and tightly coupled.

Ensuring AI is economically viable and socially valuable means we cannot rely on scaling alone. The dominant approach today prioritizes increasing compute and data while leaving the underlying reasoning machinery largely unchanged, a strategy that is becoming more expensive without becoming proportionally safer.

The alternative is architectural. Systems need to do more than predict the next word. They need to represent relationships, apply rules, check their own steps and make it possible to see how conclusions were reached.

This is where cognitive or neurosymbolic systems come into play. By organizing knowledge into interrelated concepts, rather than relying solely on brute-force pattern matching, these systems can deliver high reasoning capability with far lower energy and infrastructure demands.
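To make "apply rules, check their own steps and make it possible to see how conclusions were reached" concrete, here is a minimal Python sketch of forward chaining over explicit rules. It is a toy of the symbolic half only, not a neurosymbolic system and not any vendor's method; the `Rule` type, the `forward_chain` function, and the facts and rules are all hypothetical names invented for this example.

```python
from typing import NamedTuple


class Rule(NamedTuple):
    name: str
    premises: frozenset[str]
    conclusion: str


def forward_chain(facts: set[str], rules: list[Rule]) -> tuple[set[str], list[str]]:
    """Apply rules until nothing new can be derived, recording each step."""
    known = set(facts)
    trace: list[str] = []
    changed = True
    while changed:
        changed = False
        for rule in rules:
            # A rule fires only when all of its premises are already known.
            if rule.premises <= known and rule.conclusion not in known:
                known.add(rule.conclusion)
                trace.append(f"{rule.name}: {sorted(rule.premises)} -> {rule.conclusion}")
                changed = True
    return known, trace


# Hypothetical facts and rules, invented for this example.
rules = [
    Rule("R1", frozenset({"large_transfer", "new_counterparty"}), "needs_review"),
    Rule("R2", frozenset({"needs_review", "sanctioned_region"}), "escalate"),
]
known, trace = forward_chain(
    {"large_transfer", "new_counterparty", "sanctioned_region"}, rules
)
for step in trace:
    print(step)
```

Each derived conclusion carries the rule and premises that produced it, so a reviewer can audit the chain step by step instead of re-verifying a fluent answer from scratch.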

Emerging "cognitive AI" platforms are demonstrating how structured reasoning systems can operate on local servers or edge devices, allowing users to keep control over their own knowledge rather than outsourcing cognition to distant infrastructure.

Cognitive AI systems are harder to design and can underperform on open-ended tasks, but when reasoning is reusable rather than rederived from scratch through massive compute, costs fall and verification becomes tractable.

Control over how AI is built matters as much as how it reasons. Communities need systems they can shape, audit and deploy without waiting for permission from centralized platform owners.

Some platforms are exploring this frontier by using blockchain to enable both individuals and corporations to contribute data, models and computing resources. By decentralizing AI development itself, these approaches reduce concentration risk and align deployment with local needs rather than global demands.

AI faces an inflection point. When reasoning can be reused rather than rediscovered through massive pattern matching, systems require less compute per decision and impose a smaller verification burden on humans. That shifts the economics. Experimentation becomes cheaper and inference becomes more predictable. Scaling no longer depends on exponential increases in infrastructure.

Scaling has already done what it could. What it has exposed, just as clearly, is the limit of relying on size alone. The question now is whether the industry keeps pushing scale or starts investing in architectures that make intelligence reliable before making it bigger.

Disclaimer: The content of this article solely reflects the author's opinion and does not represent the platform in any capacity. This article is not intended to serve as a reference for making investment decisions.

You may also like

Gold price to accelerate and hit new record highs after this event – technical analyst

Goldman’s Flood Anticipates Possibility of a ‘Dramatic’ Surge in Equities

EUR/GBP: Potential for a corrective rebound - ING

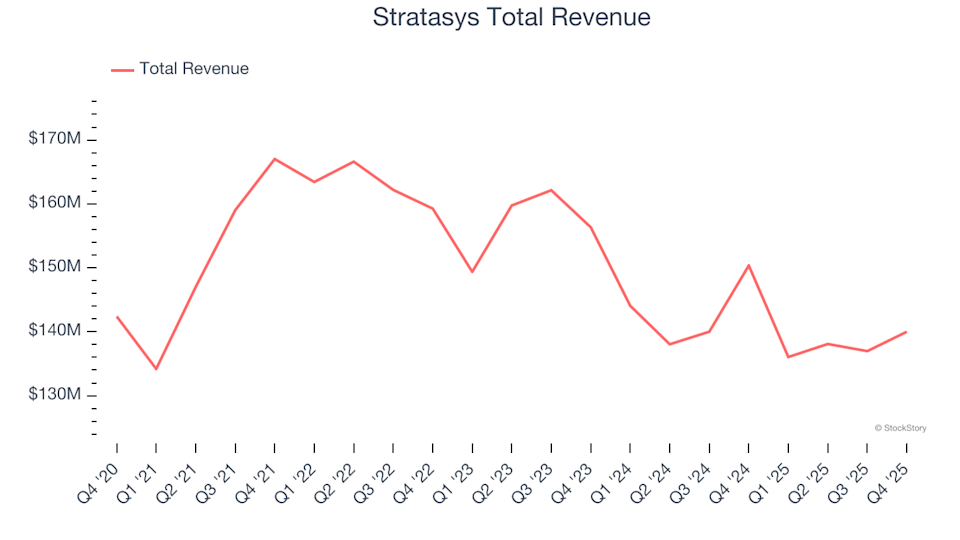

Unveiling Q4 Results: How Stratasys (NASDAQ:SSYS) Compares With Other Industrial Machinery Shares